A little more than a year ago I actively started trying out Azure as an alternative to EC2. I have to admit, I wasn’t overly impressed. Setting up an instance in the same league as its EC2 counterpart took more work, and that machine was more expensive to run.

You can check out details here:

Tableau Server: I wanna go fast on Windows Azure.

Can Tableau Server go faster on Windows Azure now?

I’m just about finished creating a presentation for the Tableau Conference which focuses on running Tableau on EC2, and I just couldn’t resist checking in with Azure one more time. And yes, this is a shameless plug for my session.

I’m not going to get into as much detail as I normally do in order to keep my powder dry for the presentation itself. I did want to throw something out here since it’s been a long time between posts, however.

Testing

During testing, I used pretty much the same workload as the product team did for their work on the Tableau Server 9 scalability whitepaper. I did not copy their approach lock, stock and barrel, but using their workbooks allows me to give you an apples-and-pears comparison rather than one that is apples-and-pianos.

As always, your results will vary based on your workload. Don’t read these numbers and take them as gospel and/or fact. Do your own testing.

I stood up (the equivalent of) two 8 core machines for each test. They looked like this:

Note I’m not doing anything fancy in terms of switching around processes to try and optimize results…and that’s the whole point. What might your “out of the box” experience be like on these platforms?

Can we do better in terms of the number of users these machines can support and how fast dashboards are rendered? Yup, sure can. But that is not what this entry is all about.

If you want to learn other things to try, you might just want to consider coming to my Tableau Conference session. Whoops! Another plug! Sorry about that. Can’t help myself.

Disk

- On all machines, I installed the OS on C: and Tableau on D:

- In all environments, I gave D: 1500 IOPS

To get my IOPS, I leveraged EBS Provisioned IOPS on EC2. On Azure, I used Premium Storage.

Azure

Azure keeps it really simple in terms of pricing out Premium storage, and I like that:

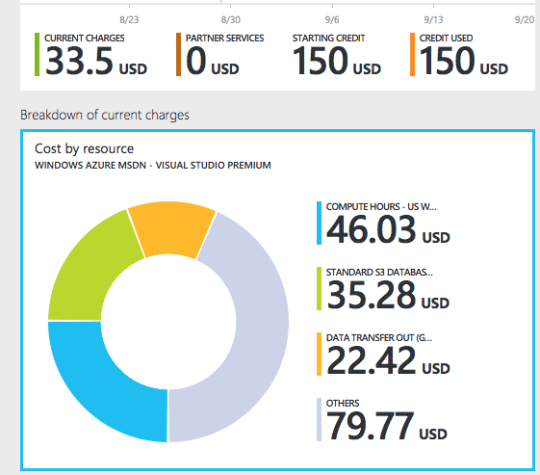

I also love how Azure keeps billing simple and shows me a quick viz which tells me exactly how much $$ I’ve spent thus far:

…even if this thing is a doughnut chart..ahem.

I’d love to see this same high-level summary of charges in AWS – you can get the same information, but it takes a bunch of clicks and reading.

Anyway, to get to 1500 IOPS, I needed 3 of the P10 disks above, striped as a single volume. That’ll cost me about $60/month, and points off for making me stripe disks at the OS level.
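The disk math here can be sketched in a few lines of Python. The 500 IOPS per P10 disk and the roughly $20/month per-disk price are assumptions inferred from the numbers in this post (3 disks for 1500 IOPS at about $60/month), not values pulled from a pricing API, and the function name is made up for illustration.

```python
import math

# Assumed figures, inferred from the text: 3 P10 disks gave 1500 IOPS for ~$60/month.
P10_IOPS = 500            # assumption: IOPS per P10 disk
P10_MONTHLY_USD = 20.0    # assumption: approximate monthly price per P10 disk


def disks_for_target_iops(target_iops, iops_per_disk=P10_IOPS):
    """Return how many disks must be striped together to reach target_iops."""
    return math.ceil(target_iops / iops_per_disk)


disks = disks_for_target_iops(1500)
monthly_cost = disks * P10_MONTHLY_USD
print(f"{disks} disks, about ${monthly_cost:.0f}/month")
```

Under those assumptions this prints `3 disks, about $60/month`. Change the target and the disk count (and the bill) scales in whole-disk steps.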

Why didn’t I just use standard storage?

I tried…and found that even when I striped 3 disks my IO was just not consistent. Several times, attempts to publish “big” (extract-laden) workbooks failed because (it seemed) some other app in my zone stole IO from me…one of the disks would start showing a huge queue (100+) and Disk Transfers/sec would shoot through the roof. This didn’t happen on Premium storage.

BTW: keep in mind that in previous blogs I DID use standard storage and it worked as long as I used more disks. I figured I might as well use Azure’s “Cadillac” offering just to see what would happen this time around, however.

AWS

I provisioned a single 100 GB disk for D: with 1500 EBS Provisioned IOPS. Here’s what the cost might look like as determined by http://calculator.s3.amazonaws.com/index.html

About $110/month, or almost double the Azure cost. But this assumes I actually need provisioned IOPS to get my work done. Do I? Might a cheaper gp2 disk be OK? To find out…well, you know…see you at TC.

Mano a Mano

I tested 4 types of machines:

EC2:

C3.4xlarge (16 vCPU / 8 Cores, 30 GB RAM): $1.504 / hour, ~$1101 / mo

C4.4xlarge (16 vCPU / 8 Cores, 30 GB RAM): $1.546 / hour, ~$1131 / mo

Azure:

DS13 (8 cores, 56 GB RAM): $1.45 / hour, ~$1080 / mo

G3 (8 cores, 112 GB RAM): $2.68 / hour, ~$1994 / mo

(prices for Azure come from: http://azure.microsoft.com/en-us/pricing/details/virtual-machines/)

You’re going to get more RAM on the DS13 than on either EC2 instance at a lower cost… But you’re also getting Xeon v2 CPUs (vs. v3 on C4s) at a lower frequency (than either the C3 or C4).

30 GB of RAM is frankly low for Tableau Server, but we’re generally going to bottleneck on CPU first anyway, so I went with it.

The G3 is obviously “costy” compared to the C4, but the G3 gives you more RAM and comparable (Xeon v3) CPU performance.
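To put a rough number on the RAM-for-the-money point, here is a small sketch that divides each machine's monthly price by its RAM. The prices and RAM sizes are copied from the list above; the "dollars per GB of RAM" metric itself is just an illustrative yardstick, and it deliberately ignores the CPU generation and clock speed differences that matter for Tableau Server.

```python
# Monthly prices and RAM sizes copied from the list above.
machines = {
    "c3.4xlarge": {"monthly_usd": 1101, "ram_gb": 30},
    "c4.4xlarge": {"monthly_usd": 1131, "ram_gb": 30},
    "DS13": {"monthly_usd": 1080, "ram_gb": 56},
    "G3": {"monthly_usd": 1994, "ram_gb": 112},
}


def usd_per_gb_ram(spec):
    """Monthly cost divided by RAM; a crude metric that ignores CPU differences."""
    return spec["monthly_usd"] / spec["ram_gb"]


# Print cheapest-per-GB first.
for name, spec in sorted(machines.items(), key=lambda kv: usd_per_gb_ram(kv[1])):
    print(f"{name:>11}: ${usd_per_gb_ram(spec):6.2f} per GB of RAM per month")
```

On these numbers the Azure machines land at roughly half the per-GB price of the EC2 instances, which is exactly why RAM alone is a poor way to pick a box that will bottleneck on CPU first.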

…and now the moment you’ve all been waiting for. For each machine I ran a four-ish hour test during which I generated load for between 10 and 250 virtual users. I increased load by 10 users every 10 minutes.
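The ramp described above is easy to write down as a schedule. This is only a sketch of the shape of the test, not the actual load-generator configuration: it assumes the run starts at 10 users and that every level, including the last one, is held for the full 10 minutes. The function and parameter names are hypothetical.

```python
def ramp_schedule(start_users=10, max_users=250, step_users=10, step_minutes=10):
    """Return (elapsed_minutes_at_step_start, virtual_users) for each load step."""
    levels = range(start_users, max_users + 1, step_users)
    return [(i * step_minutes, users) for i, users in enumerate(levels)]


STEP_MINUTES = 10
schedule = ramp_schedule(step_minutes=STEP_MINUTES)

# Assumption: the final level is also held for a full step.
total_minutes = len(schedule) * STEP_MINUTES
print(f"{len(schedule)} steps, {total_minutes} minutes (~{total_minutes / 60:.1f} hours)")
```

That works out to 25 steps over 250 minutes, or about 4.2 hours, which lines up with the "four-ish hour" run time.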

DS13 & C3

The C3 is the clear leader. (NOTE: Since the Azure testing was an itch I decided to scratch at the last moment, you’ll see that I refer to the C3 instances as “baseline” in the color legend. These are c3.4xlarge instances and I ran the same test twice for most of the work I did on EC2)

We see:

- Higher TPS on the C3

- Lower Response time on the C3

- Lower Error Rate on the C3

Let’s zoom in a bit, shall we?

I’m getting about 110-120 virtual users off the C3 and only 70 off the DS13. Response time is also ~2x on the DS13.

Conclusion: The DS13 probably doesn’t qualify as “go fast” compared to EC2 but I might consider it for a “go fast enough” scenario where I don’t have tons of concurrent users…and that RAM is pretty sweet.

G3 & C4

These are the big boys. Check it out:

Note how things have changed a bit:

- The G3 hangs in there when it comes to low response time (sure, after the machine completely breaks down it is much worse than the C4, but by that point we’re only talking about different degrees of unacceptable performance)

- The C4 still has a better error rate, but the G3 gives us much more runway than the DS13

But, I’m still able to handle more concurrent users on the C4, so game to EC2.

Conclusion: Azure can now “go fast” with the new-to-me G-Series, but at a potentially higher cost than the C4…and I gotta say, the Azure portal is pretty fun to work in…slower, but more fun 🙂

Isn’t the D drive the “ephemeral” drive that gets wiped out if you stop and re-start your server?

That’s dependent on how you configure the machine. For me, D: was a permanent drive.

Any opinions on the Azure H-Series?

Hey Kevin –

I haven’t tested the H’s yet, but I’d think the H8 & 16 (cost aside) would be very good instances. No idea around pricing on these: I assume they’re pretty expensive?

The one thing that could make them less flexible is their “once an H, always an H” limitation. I’m sort of a miser, so I like to initially build out my clusters on cheap / low-end instances. Then, once I have everything installed and all my data restored, I change them to the beefier “production” instance type and size. Can’t do that here since you can’t switch an H to a DSv2 and back again. There are some scenarios (like having a Primary node with no other services running on it) when 8 cores (minimum H config) could be overkill: You’d still want a DSv2 for one of those.